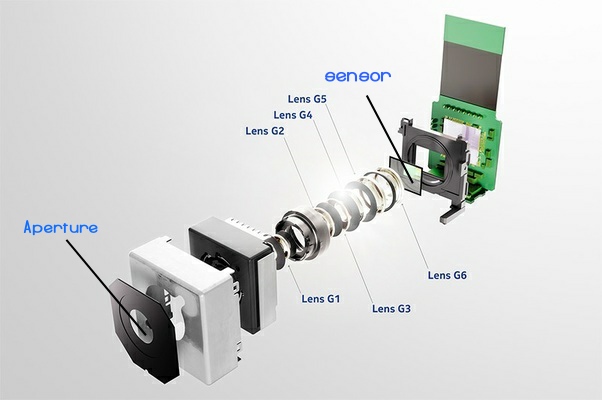

Smartphones have replaced a lot of things in our arsenal, and almost anyone who once wanted a full-fledged camera can now do a lot with the one in their pocket. Here we explain the tiny hole (or holes) on the back of your phone and how smartphone cameras work. A camera needs a few key parts to capture moments as images: the sensor is the most important, followed by the lens or lenses.

Computational photography

Computational photography is like autopilot for your camera: an algorithm controls the sensor and processes what it captures. You choose what to shoot, but the phone does not take just one picture. Phones like the Google Pixel series, Samsung flagships and even the iPhone capture a burst of frames, and can pull data from several of their cameras, to produce a single photo or video. Post-processing matters more than the number of cameras, but today’s algorithms and processors are capable enough to use extra cameras to gather more information and deliver better results.
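To make the multi-frame idea concrete, here is a minimal Python sketch of burst stacking: averaging several noisy captures of the same scene so that random sensor noise partly cancels out. It assumes the frames are already perfectly aligned and uses a plain per-pixel mean. Real pipelines, such as the Pixel’s, also align frames and merge them more robustly, so the function names and the simulated noise here are hypothetical.

```python
import random
import statistics

def capture_burst(true_scene, num_frames, noise_std, seed=0):
    """Simulate a burst: every frame is the true scene plus random sensor noise."""
    rng = random.Random(seed)
    return [[pixel + rng.gauss(0, noise_std) for pixel in true_scene]
            for _ in range(num_frames)]

def stack_frames(frames):
    """Merge an aligned burst by averaging each pixel position across frames."""
    return [statistics.fmean(values) for values in zip(*frames)]

def rms_error(image, reference):
    """Root-mean-square difference between an image and the true scene."""
    return statistics.fmean((a - b) ** 2 for a, b in zip(image, reference)) ** 0.5

# Hypothetical 1-D "scene" of 1,000 brightness values on a 0-255 scale.
scene = [i % 256 for i in range(1000)]
burst = capture_burst(scene, num_frames=8, noise_std=20.0)
single, merged = burst[0], stack_frames(burst)
print(f"single frame error: {rms_error(single, scene):.1f}")
print(f"8-frame merge error: {rms_error(merged, scene):.1f}")
```

When the noise in each frame is independent, averaging N frames cuts it by roughly a factor of √N. The 8-frame merge should therefore land at about a third of the single-frame error, which is one reason burst processing helps so much in low light.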

Each manufacturer tunes its processing to its own test conditions, which is why the same scene looks different on different phones. Don’t be fooled by how a photo looks on your own screen, especially when editing: the same picture can look different to someone viewing it on a Super AMOLED (SAMOLED) panel versus an IPS panel.

GCam (Google Camera) is the camera app from Google’s Pixel phones. It is not open source, but community-modified ports, often called GCam mods, bring Pixel-like image processing to many other Android phones. If you want to try it, search online for a port that supports your phone model; on many phones it noticeably improves image quality. A good photo is roughly a 50-50 mix of hardware and software.

Does size matter?

Have you ever noticed that a 108MP Redmi phone can still lose to a 12MP iPhone shooting the same scene? Does the megapixel count even matter? Short answer: not as much as the algorithm does. And since software runs better on better hardware, the main SoC (system on chip) matters too: more processing power allows more sophisticated image processing and retains finer detail. If you enjoy colour grading or post-processing, most phones support RAW capture, but most of us would rather not fill limited storage with such large files, would we?
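A quick back-of-the-envelope calculation shows why RAW files eat storage, and why megapixels alone inflate them. This sketch assumes one 12-bit value per photosite, with no compression and no metadata. The 12-bit figure is an illustrative assumption, and real RAW (DNG) files are often compressed or saved at a binned resolution.

```python
def raw_size_mb(megapixels, bits_per_pixel=12):
    """Approximate uncompressed RAW size: one value per photosite, no compression."""
    total_bits = megapixels * 1_000_000 * bits_per_pixel
    return total_bits / 8 / 1_000_000  # bits -> bytes -> decimal megabytes

for name, mp in [("12MP sensor", 12), ("108MP sensor", 108)]:
    print(f"{name}: ~{raw_size_mb(mp):.0f} MB per uncompressed RAW frame")
```

Under these assumptions a 12MP frame is about 18 MB and a 108MP frame about 162 MB, nine times the data for the processor to handle, without any guarantee of a better-looking photo.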

Pixel binning

Pixel binning made things better. It groups adjacent pixels together into one larger super-pixel that combines their information. For example, a 108MP sensor that bins each 3×3 group outputs a 12MP image. The result is faster to process and performs better in low light, because each super-pixel effectively collects the light of several small pixels. As of 2022, almost every phone uses pixel binning; better phones just do it better.
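Here is a minimal Python sketch of 2×2 binning on a grayscale image stored as a list of rows. It is a simplification: real sensors typically bin during readout and use a colour filter layout (such as Quad Bayer) where each binned group shares one colour, whereas this sketch just averages brightness values digitally. The function name and sample values are hypothetical.

```python
def bin_pixels(image, factor=2):
    """Average each factor x factor block of pixels into one super-pixel.

    `image` is a list of rows of brightness values; its height and width
    must be multiples of `factor`.
    """
    height, width = len(image), len(image[0])
    if height % factor or width % factor:
        raise ValueError("image dimensions must be multiples of the binning factor")
    binned = []
    for top in range(0, height, factor):
        row = []
        for left in range(0, width, factor):
            block = [image[y][x]
                     for y in range(top, top + factor)
                     for x in range(left, left + factor)]
            row.append(sum(block) / len(block))
        binned.append(row)
    return binned

# A hypothetical 4x4 patch of slightly noisy pixel values.
patch = [
    [10, 14, 200, 196],
    [12, 12, 204, 200],
    [50, 54, 90, 94],
    [46, 50, 86, 90],
]
print(bin_pixels(patch))  # -> [[12.0, 200.0], [50.0, 90.0]]
```

Averaging keeps the brightness scale unchanged and smooths out pixel-to-pixel noise, while the output has a quarter of the pixels. Summing the block instead would model the extra light the super-pixel collects.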

OIS vs EIS

OIS is optical image stabilization, while EIS is electronic image stabilization. OIS physically shifts the lens (or, in some designs, the sensor) to counteract shaky hands, which reduces motion blur. EIS instead shifts and crops the captured frames digitally to cancel out the motion. OIS is therefore a hardware mechanism with physical moving parts, while EIS is a software algorithm. Most phones without OIS rely on EIS, and some use both at the same time for better results.
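The core EIS idea can be sketched in a few lines of Python. The phone keeps a margin of pixels around the output frame and moves the crop window to follow the shake, so the scene stays put. This sketch assumes the per-frame shift is already known as whole pixels. Real EIS estimates motion from gyroscope data and applies sub-pixel warps and rolling-shutter correction. The names here are hypothetical.

```python
def stabilize(frames, content_shifts, margin):
    """Crop each frame, moving the crop window to follow the scene's drift.

    content_shifts: per-frame (dx, dy) in pixels, i.e. how far the scene
        content moved within the frame relative to the first frame.
    margin: pixels reserved on every side of the frame for shifting.
    """
    stabilized = []
    for frame, (dx, dy) in zip(frames, content_shifts):
        if abs(dx) > margin or abs(dy) > margin:
            raise ValueError("shake exceeds the reserved crop margin")
        out_h = len(frame) - 2 * margin
        out_w = len(frame[0]) - 2 * margin
        top, left = margin + dy, margin + dx
        stabilized.append([row[left:left + out_w] for row in frame[top:top + out_h]])
    return stabilized

# Hypothetical demo: a 10x10 "world" seen through a shaky 8x8 sensor window.
world = [[10 * y + x for x in range(10)] for y in range(10)]
camera_positions = [(1, 1), (2, 1), (1, 0), (0, 2)]  # (row, col) of window top-left
frames = [[row[cx:cx + 8] for row in world[cy:cy + 8]] for cy, cx in camera_positions]
# When the window moves by +1, the content inside it appears to move by -1.
shifts = [(1 - cx, 1 - cy) for cy, cx in camera_positions]
steady = stabilize(frames, shifts, margin=1)
print(all(f == steady[0] for f in steady))  # -> True
```

Every stabilized frame shows the same 6×6 view even though the raw frames jump around. That margin is also why footage usually looks slightly more zoomed in when EIS is switched on.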

What’s the right FPS?

There is no single correct frame rate, but standard (real-time) high-definition video is commonly shot at 30 or 60FPS today. FPS is the number of frames your sensor captures per second, and the more frames you record, the more visual data you have for post-processing, such as smooth slow motion. Keep in mind that frame rate has a big impact on the file size of any video recording. Recording at 4K or higher also strains your phone’s processor, which is why some phones get hot during long 4K recordings or limit 4K clips to a set length. Fun fact: a 4K UHD frame is 3840 × 2160 pixels, or about 8.3MP, so in theory any camera with more than about 8.3MP has enough pixels to shoot 4K.
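The arithmetic behind the 4K fun fact and the frame-rate cost is easy to check in Python. This sketch assumes 3 bytes per pixel (8-bit RGB) and measures the data *before* video compression. Phones actually record with codecs such as H.264 or HEVC, so real files are far smaller, but the processor still has to handle every one of these frames.

```python
UHD_4K = (3840, 2160)  # width, height of a 4K UHD frame

def megapixels(width, height):
    """Pixel count of one frame, in millions."""
    return width * height / 1_000_000

def uncompressed_rate_mb_s(width, height, fps, bytes_per_pixel=3):
    """Raw video data produced per second before compression (decimal MB)."""
    return width * height * bytes_per_pixel * fps / 1_000_000

print(f"4K UHD frame: {megapixels(*UHD_4K):.2f} MP")  # -> 4K UHD frame: 8.29 MP
for fps in (30, 60):
    print(f"4K at {fps} FPS: ~{uncompressed_rate_mb_s(*UHD_4K, fps):.0f} MB/s uncompressed")
```

Under these assumptions 4K at 30FPS is roughly 746 MB/s of raw pixel data, and 60FPS doubles that to about 1,493 MB/s, which helps explain the heat and the recording time limits.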

What’s with the 2MP depth and 5MP telephoto cameras?

Yes, you guessed it: most of these smaller cameras are part of the overall imaging algorithm and are there mainly to collect data. Most main smartphone cameras have high enough resolution to produce both normal and telephoto shots by digitally cropping the main image. In the same way, many ultra-wide cameras can double as excellent macro cameras. So when this low-end hardware is not used as a primary camera, it acts as a secondary camera, capturing extra data (such as depth information) to improve the overall image quality. Most manufacturers will not tell you this, and that’s fine with us as long as the final image or video looks good.
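Digital "telephoto" by cropping can be sketched in Python as keeping only the centre of the frame. The key point is that the crop shrinks both width and height, so resolution falls with the square of the zoom factor. This sketch only crops. Real phones also upscale the result and may fuse in data from a dedicated telephoto camera. The function names are hypothetical.

```python
def crop_zoom(image, zoom):
    """Digitally 'zoom' by keeping only the central 1/zoom of each dimension."""
    height, width = len(image), len(image[0])
    crop_h, crop_w = round(height / zoom), round(width / zoom)
    top, left = (height - crop_h) // 2, (width - crop_w) // 2
    return [row[left:left + crop_w] for row in image[top:top + crop_h]]

def megapixels_after_crop(sensor_mp, zoom):
    """Resolution left after a crop zoom: both dimensions shrink by `zoom`."""
    return sensor_mp / zoom ** 2

print(megapixels_after_crop(108, 3))  # -> 12.0
print(megapixels_after_crop(12, 2))   # -> 3.0
```

This is why a high-resolution main sensor can stand in for a telephoto: a 108MP sensor cropped to 3× still leaves a 12MP image, while a 12MP sensor cropped to only 2× drops to 3MP.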

AI and ML

Artificial intelligence and machine learning have come a long way. Today, they are largely responsible for your smartphone’s impressive low-light images and beautiful cinematography. These algorithms run seamlessly in the background to make image processing near-instant, and they also improve with software updates. Each smartphone maker researches and develops its own AI and ML algorithms, so results vary from phone to phone.

Explained – Smartphone cameras

Now that you know how most smartphone cameras work, welcome to the knowledgeable side. Because of their physical size constraints and minimal moving parts, smartphone cameras have evolved by using all kinds of software black magic to improve your photos and videos. And as with most technology, what debuts on the top-end flagships trickles down to lower price brackets over time. Who knows what future technology will bring to the table? All we hope is that our timeless memories become ever more crisp, clear, dynamic and extraordinary.
